AI Governance

Most enterprise AI risk discussions focus on what enters the organization: adversarial inputs, synthetic media, prompt injection, and model vulnerabilities. But in many restrictive enterprise environments, the more immediate risk flows in the opposite direction: sensitive data leaving the organization through unsanctioned AI usage.

When internal AI tools are too limited, too slow, or poorly aligned with real workflows, employees find workarounds. They copy proprietary data into public LLMs, upload regulated documents to external APIs, or install browser extensions that connect to AI services outside the organization’s visibility.

This behavior is rarely malicious. It is almost always a productivity-driven bypass, and it creates a governance challenge that traditional security models struggle to detect.

The Shadow AI Problem

Shadow AI refers to employees using AI tools outside approved enterprise systems. This often emerges when governance restrictions exceed the practical needs of the workforce.

Common scenarios include:

- A developer copying proprietary code into a public AI assistant

- A contractor exporting regulated documents to process them with a locally run model

- An analyst uploading client data to an external model for summarization

- A research team using personal API keys to access capabilities unavailable internally

From the user’s perspective, these actions are simply attempts to get work done faster.

From the organization’s perspective, they bypass:

- logging and monitoring

- contractual protections

- data processing agreements

- regulatory controls

This creates silent exposure without any obvious security event.

The Supply Chain Blind Spot

Shadow AI does not always originate from individual users. Many enterprise software platforms are now embedding AI features powered by external model providers.

Examples include:

- project management tools using external models for summarization

- CRM platforms generating reports through third-party AI APIs

- document systems routing content through external LLM providers

In many cases, organizations have limited visibility into these flows. Vendor AI disclosure should increasingly become a standard procurement requirement.

Why Traditional Security Controls Miss This

Most enterprise security programs are designed to detect malicious data exfiltration.

They assume patterns such as:

- large file transfers

- suspicious external connections

- abnormal user behavior

Shadow AI activity rarely fits that pattern. Instead, it looks like normal work.

Examples include:

- small document uploads

- routine copy-and-paste activity

- browser-based API usage

- cloud tool interactions during normal working hours

Traditional data loss prevention (DLP) systems tuned for large transfers often miss these behaviors entirely. This is why AI governance must address workflow incentives, not just perimeter controls.
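To make the gap concrete, here is a minimal sketch comparing a size-threshold rule with a simple per-user running total. The 50 MB limit, the field names, and the sample data are all assumptions chosen for illustration, not settings from any particular DLP product.

```python
# Hypothetical upload records: many small pastes, none individually large.
uploads = [{"user": "analyst1", "bytes": 20_000} for _ in range(200)]


def threshold_alerts(records, limit_bytes=50_000_000):
    """Classic rule: alert only on single transfers at or above the limit."""
    return [r for r in records if r["bytes"] >= limit_bytes]


def cumulative_by_user(records):
    """Alternative view: total bytes sent per user across all transfers."""
    totals = {}
    for r in records:
        totals[r["user"]] = totals.get(r["user"], 0) + r["bytes"]
    return totals


print(len(threshold_alerts(uploads)))   # no single upload crosses the limit
print(cumulative_by_user(uploads))      # yet the total is several megabytes
```

The point is not that cumulative totals are the right detector, only that a rule keyed on individual transfer size has nothing to fire on when the activity arrives in small pieces.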

Categories of Shadow AI Risk

Shadow AI introduces several categories of enterprise risk.

- IP Leakage: Employees may upload proprietary code, research, or internal designs into external AI systems.

- Regulatory Exposure: Sensitive or regulated data may be processed by vendors that are not authorized under compliance frameworks.

- Contract Violations: Data may be used outside the scope of client agreements or data processing agreements (DPAs).

- Model Contamination: Client data may inadvertently enter external model training pipelines.

- Audit Failure: AI-assisted outputs may lack traceability in regulated workflows.

These risks can arise even when employees believe they are simply using productivity tools.

Detection & Mitigation Mechanisms

Organizations do not need entirely new security infrastructure to address shadow AI risk. Most mitigation strategies rely on existing enterprise security capabilities applied to AI usage patterns.

DLP + CASB Monitoring

Cloud Access Security Brokers (CASBs) are designed to monitor SaaS usage across enterprise networks. When combined with DLP systems, they can detect shadow AI patterns.

Examples include monitoring:

- outbound traffic to AI provider domains (OpenAI, Anthropic, Google, etc.)

- unusual file upload patterns

- personal API key usage from corporate networks

- repeated uploads of structured documents

- browser extensions interacting with model APIs

These controls already exist in most enterprise security stacks. The challenge is applying them to AI workflows.
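As a rough illustration of the first item in that list, the sketch below sums upload-style traffic to a watchlist of AI provider domains from proxy log events. The event fields (`user`, `url`, `method`, `bytes_out`) and the two-entry watchlist are assumptions. A real deployment would take both from the CASB or proxy already in place.

```python
from collections import Counter
from urllib.parse import urlsplit

# Illustrative watchlist only; a real list would come from the CASB's app catalog.
AI_PROVIDER_DOMAINS = {"openai.com", "anthropic.com"}
UPLOAD_METHODS = {"POST", "PUT"}


def is_ai_domain(host: str) -> bool:
    """True if host is a watched domain or one of its subdomains."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_PROVIDER_DOMAINS)


def bytes_sent_to_ai(events):
    """Sum outbound bytes per user for upload-style requests to watched domains.

    Each event is a dict with hypothetical keys: user, url, method, bytes_out.
    """
    totals = Counter()
    for event in events:
        host = urlsplit(event["url"]).hostname or ""
        if event["method"] in UPLOAD_METHODS and is_ai_domain(host):
            totals[event["user"]] += event["bytes_out"]
    return dict(totals)


events = [
    {"user": "dev1", "url": "https://api.openai.com/v1/chat/completions",
     "method": "POST", "bytes_out": 4200},
    {"user": "dev1", "url": "https://intranet.example.com/wiki",
     "method": "POST", "bytes_out": 900},
    {"user": "analyst2", "url": "https://api.anthropic.com/v1/messages",
     "method": "POST", "bytes_out": 15000},
]
print(bytes_sent_to_ai(events))
```

Matching on the domain suffix rather than a substring avoids false hits on unrelated hosts whose names merely contain a provider's name.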

Behavioral Anomaly Detection (UEBA)

User and Entity Behavior Analytics (UEBA) platforms can detect patterns associated with shadow AI adoption.

Typical indicators include:

- low adoption of internal AI tools combined with productivity spikes

- unusual outbound traffic to AI service domains

- teams reporting dissatisfaction with internal AI tools

- unusual copy-paste volumes detected at endpoints

Low adoption of sanctioned AI tools is itself a leading indicator of shadow AI risk.
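One way to operationalize that indicator is to compare sanctioned-tool adoption with observed external AI traffic per team. The following is a minimal sketch. The `TeamUsage` fields and both thresholds are hypothetical and would need tuning against an organization's own baseline.

```python
from dataclasses import dataclass


@dataclass
class TeamUsage:
    team: str
    headcount: int
    internal_ai_users: int      # distinct users of the sanctioned tool this period
    external_ai_requests: int   # proxy-observed requests to AI provider domains


def shadow_ai_candidates(teams, max_adoption=0.25, min_external_per_head=5.0):
    """Return teams with low sanctioned-tool adoption and high external AI traffic.

    Both thresholds are illustrative assumptions, not recommended values.
    """
    flagged = []
    for t in teams:
        if t.headcount <= 0:
            continue  # skip empty teams to avoid division by zero
        adoption = t.internal_ai_users / t.headcount
        external = t.external_ai_requests / t.headcount
        if adoption <= max_adoption and external >= min_external_per_head:
            flagged.append(t.team)
    return flagged


teams = [
    TeamUsage("research", headcount=20, internal_ai_users=2, external_ai_requests=400),
    TeamUsage("finance", headcount=10, internal_ai_users=8, external_ai_requests=3),
    TeamUsage("design", headcount=5, internal_ai_users=1, external_ai_requests=4),
]
print(shadow_ai_candidates(teams))
```

A flagged team is a prompt for a conversation about tooling gaps, consistent with the article's framing, rather than evidence of misconduct.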

Honeytokens & Canary Documents

Another powerful detection technique involves traceable markers embedded in sensitive documents.

These markers may include:

- unique string identifiers

- invisible watermark patterns

- embedded metadata hashes

- internal reference IDs

If these markers later appear in external outputs, public datasets, or third-party systems, the organization can trace the exfiltration path. This technique, widely used in cybersecurity, provides the kind of forensic evidence required for contractual enforcement.
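A unique string identifier can be generated per document and recipient, then searched for in any text that surfaces later. The sketch below uses an HMAC so tokens cannot be forged without the secret. The `REF-` prefix, token length, and registry layout are assumptions made for illustration.

```python
import hashlib
import hmac
import re

# Pattern for the hypothetical token format produced by make_canary().
CANARY_RE = re.compile(r"REF-[0-9A-F]{16}")


def make_canary(secret: bytes, doc_id: str, recipient: str) -> str:
    """Derive a deterministic, innocuous-looking reference ID for one copy."""
    message = f"{doc_id}|{recipient}".encode()
    digest = hmac.new(secret, message, hashlib.sha256).hexdigest()
    return f"REF-{digest[:16].upper()}"


def find_canaries(text: str, registry: dict) -> list:
    """Return (doc_id, recipient) for every registered token found in text."""
    return [registry[tok] for tok in CANARY_RE.findall(text) if tok in registry]


secret = b"demo-secret-not-for-production"
token = make_canary(secret, "design-spec-7", "contractor-42")
registry = {token: ("design-spec-7", "contractor-42")}

leaked = f"Summary of internal spec ({token}): the system uses..."
print(find_canaries(leaked, registry))
```

Because each recipient's copy carries a different token, a match identifies which copy leaked, not just which document.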

Access Segmentation & Tool Gating

Access control remains an important mitigation layer.

Examples include:

- limiting contractor access to specific datasets

- requiring additional approvals for high-risk AI usage

- enforcing identity-linked model usage

- logging all AI interactions tied to authenticated users

These measures ensure that sensitive workflows remain observable and auditable.
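These rules can be expressed as a small policy check that runs before any model call. The following is a sketch only: the roles, dataset names, and in-memory log are hypothetical stand-ins for whatever identity provider and logging pipeline an organization already uses.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical policy data for illustration.
CONTRACTOR_DATASETS = {"public-docs"}
HIGH_RISK_DATASETS = {"client-records"}
AUDIT_LOG = []  # stand-in for a real, durable log sink


@dataclass(frozen=True)
class Request:
    user_id: str          # authenticated identity; empty means unauthenticated
    role: str             # e.g. "employee" or "contractor"
    dataset: str
    approved: bool = False  # whether an additional approval was granted


def authorize(req: Request) -> bool:
    """Apply the gating rules and log every decision against the user."""
    if not req.user_id:
        decision = "deny: unauthenticated"
    elif req.role == "contractor" and req.dataset not in CONTRACTOR_DATASETS:
        decision = "deny: dataset not permitted for contractors"
    elif req.dataset in HIGH_RISK_DATASETS and not req.approved:
        decision = "deny: approval required"
    else:
        decision = "allow"
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": req.user_id,
        "dataset": req.dataset,
        "decision": decision,
    })
    return decision == "allow"


print(authorize(Request("u1", "employee", "public-docs")))
print(authorize(Request("c9", "contractor", "client-records")))
print(AUDIT_LOG[-1]["decision"])
```

Denials are logged as well as approvals, since a pattern of denied requests is itself a signal that sanctioned tooling is not meeting a need.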

The Governance Insight Most Companies Miss

The most counterintuitive finding in shadow AI governance is this: tightening restrictions without improving internal AI tools often increases risk. When internal tools are slow, limited, or poorly integrated into workflows, employees will naturally look elsewhere.

Shadow AI is often not a compliance failure. It is a productivity signal. Organizations should therefore ask a different question.

Not:

“How do we stop employees from using external AI tools?”

But:

“How do we make sanctioned AI the obvious and superior choice?”

When internal tools match external capabilities and integrate smoothly into workflows, bypass incentives drop dramatically.

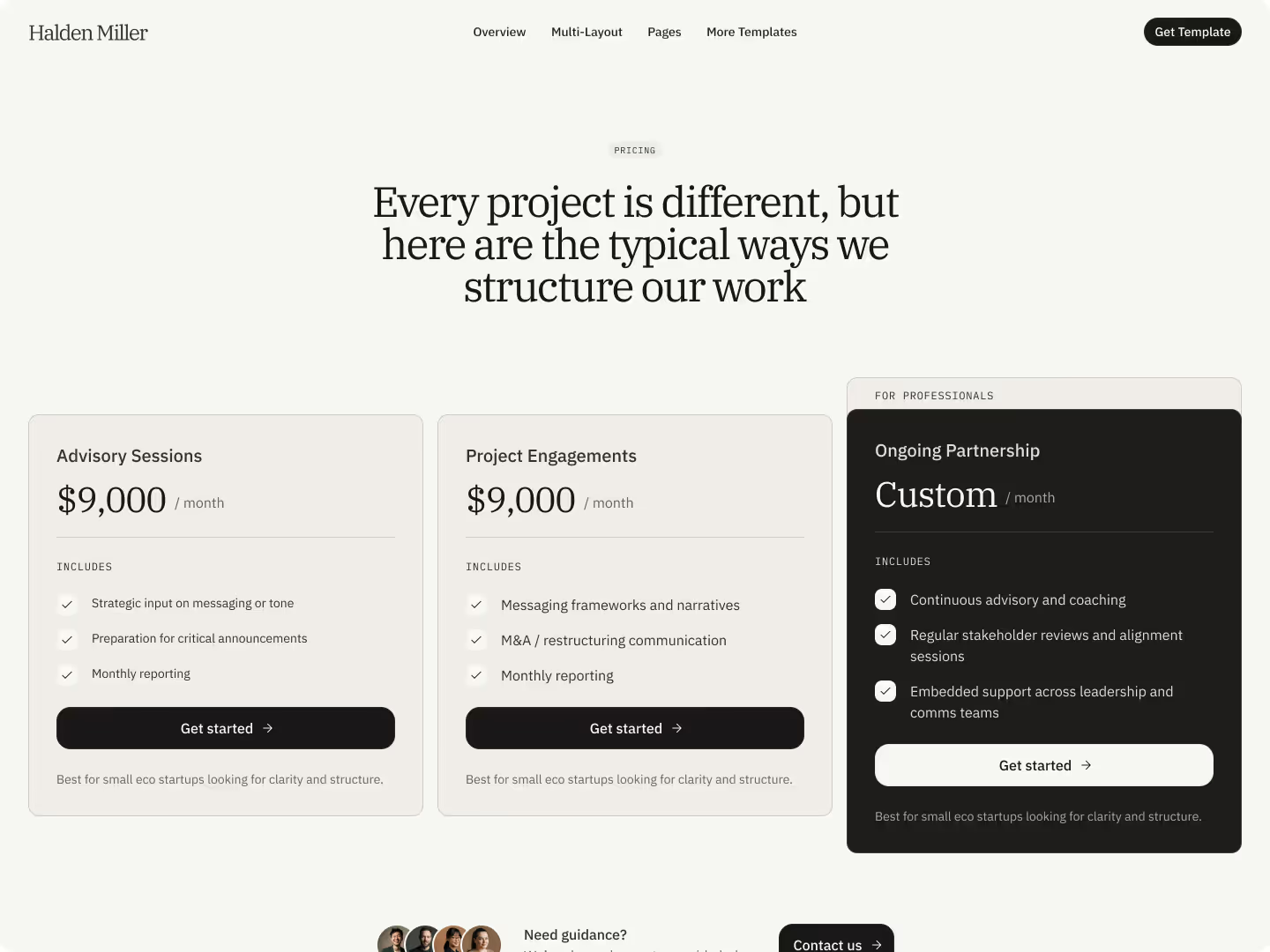

A Balanced Governance Framework

Effective enterprise AI governance requires multiple layers.

Layer 1: Enablement

Provide high-quality internal AI tools that integrate directly into real workflows.

This includes:

- multimodal capabilities

- developer-friendly environments

- contractor-accessible systems

- workflow integration

Enablement reduces the incentive for shadow AI usage.

Layer 2: Monitoring

Organizations should monitor AI usage patterns across their environments.

This includes:

- CASB monitoring

- DLP integration

- outbound domain analysis

- internal AI adoption metrics

- vendor AI disclosure requirements

Layer 3: Contract Enforcement

Governance must enforce contractual boundaries.

This includes:

- vendor model training prohibitions

- tenant isolation

- data usage restrictions

- runtime policy enforcement

Layer 4: Auditability

Organizations must be able to demonstrate how AI systems are used.

This requires:

- immutable logging

- traceable AI-assisted outputs

- versioned governance policies

- audit-ready reporting aligned with frameworks such as SOC 2, HIPAA, and ISO/IEC 27001
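Immutable logging is usually provided by storage controls, but the underlying idea can be sketched with a hash chain, which makes after-the-fact edits detectable. This is a tamper-evident illustration under simplifying assumptions (in-memory list, hypothetical record fields), not a substitute for write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with a canonical record dump."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode()).hexdigest()


def append_entry(log: list, record: dict) -> dict:
    """Append a record, linking it to the hash of the entry before it."""
    prev = log[-1]["hash"] if log else GENESIS
    entry = {"record": record, "prev": prev, "hash": _entry_hash(prev, record)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


log = []
append_entry(log, {"user": "u1", "tool": "internal-assistant", "action": "summarize"})
append_entry(log, {"user": "u2", "tool": "internal-assistant", "action": "draft"})
print(verify(log))

log[0]["record"]["user"] = "someone-else"  # simulate tampering
print(verify(log))
```

Tying each AI-assisted output to an entry in such a log is one way to provide the traceability that auditors look for.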

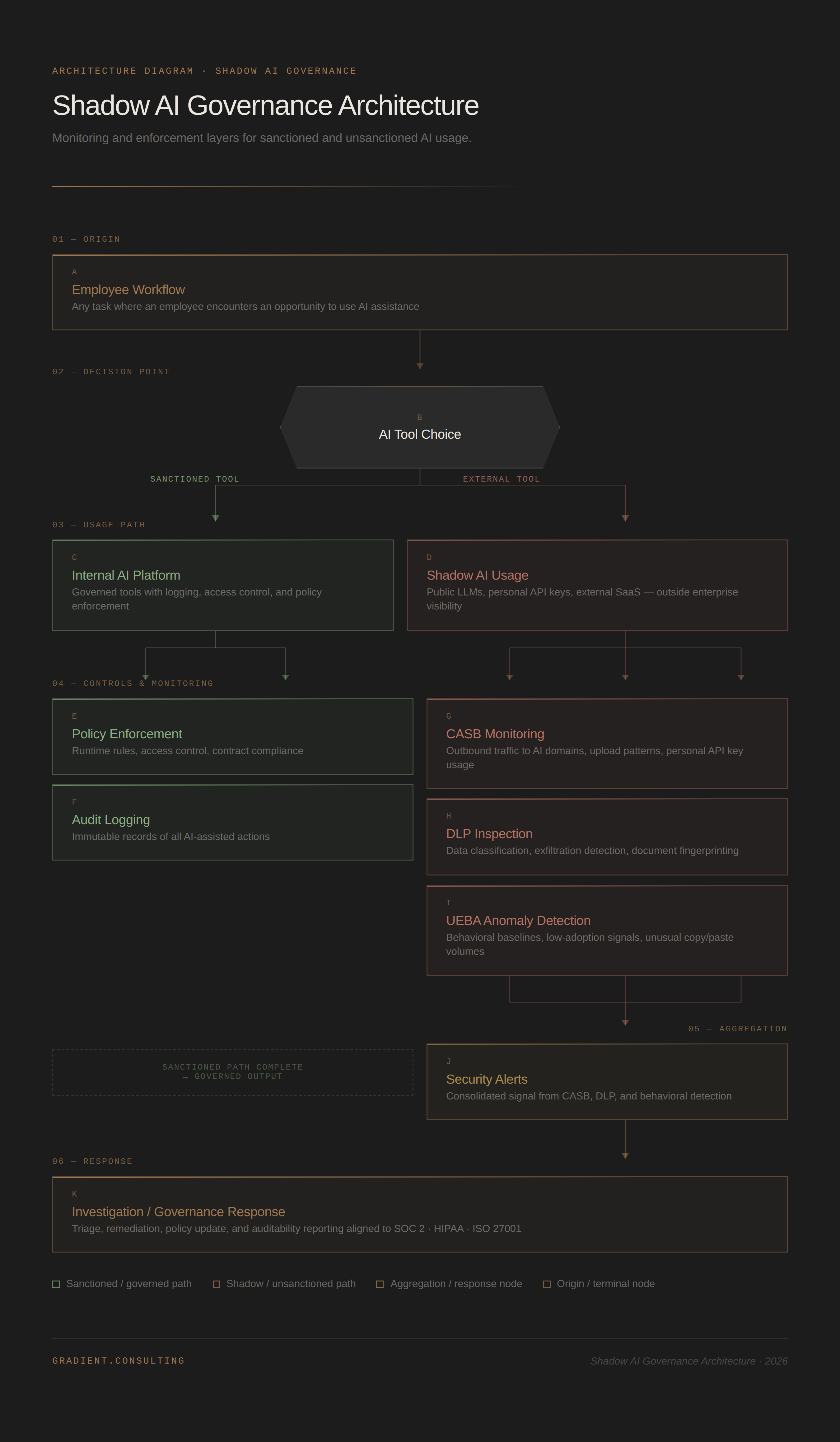

Shadow AI Governance Architecture

The following simplified architecture illustrates how monitoring and governance layers interact.

This architecture focuses on visibility and enforcement rather than total restriction.

Red Flags to Watch

Several signals often indicate governance misalignment.

These include:

- teams hiring external AI consultants despite internal tools

- departments purchasing AI SaaS outside procurement channels

- low adoption of internal AI platforms

- unexplained spikes in outbound traffic to AI providers

- contractors requesting local data exports

- AI-assisted outputs appearing with no internal usage logs

These signals usually indicate tooling or workflow gaps, not misconduct.

What This Means for Leaders

Shadow AI is rarely a security failure. It is usually a governance and enablement failure. Several implications follow.

1. Restriction alone increases bypass behavior

If internal AI tools do not match the capabilities of public models, employees will inevitably route around restrictions.

2. Visibility matters more than prohibition

Organizations should prioritize monitoring, traceability, and auditability over attempting to block all external AI usage.

3. Internal AI platforms must compete with public tools

Sanctioned tools must provide similar usability and capabilities to the models employees can access externally.

4. Procurement processes must include AI disclosure

Vendors embedding external AI models into their products create additional data exposure pathways.

5. Governance must account for human workflow incentives

The most secure systems are those where secure behavior is the path of least resistance.

TL;DR

- Shadow AI often arises from productivity-driven bypass, not malicious intent.

- Traditional security controls miss most shadow AI behavior.

- CASB, DLP, and behavioral monitoring can detect AI-related exfiltration patterns.

- Honeytokens provide forensic traceability for leaked documents.

- Governance must combine enablement, monitoring, enforcement, and auditability.

The most effective AI governance strategy is not:

Block + Assume Compliance

It is:

Enable + Monitor + Enforce + Audit

Organizations that make sanctioned AI tools the easiest option dramatically reduce shadow AI risk.