Why the Future of Synthetic Media Risk Is Provenance, Not Detection

Organizations are entering a world where seeing is no longer believing. Synthetic media (images, video, and audio generated by AI) is rapidly approaching photorealism. The visual artifacts that once made deepfakes easy to identify are disappearing.

For enterprises, this creates a new category of operational risk. Fraud, impersonation, misinformation, and intellectual property misuse can now be produced at scale using generative systems. At the same time, organizations increasingly rely on AI pipelines that ingest, process, and redistribute large volumes of media.

Most conversations about this problem focus on detecting fake content. But detection alone is not a durable strategy. The longer-term answer lies in provenance and governance: systems that verify where content comes from and enforce policies around how it can be used. This is where enterprise AI safety is heading.

The Core Argument

The future of AI safety is not about identifying what is fake. It is about proving what is genuine. Detection systems try to determine whether a piece of media was generated artificially. Provenance systems take a different approach. They attempt to verify where content originated and how it has been modified. This fundamentally changes the question.

Instead of asking:

“Is this fake?”

The system asks:

“Can we verify where this came from?”

As generative models improve, that becomes the more durable foundation for trust.

The Synthetic Media Risk Landscape

Synthetic media introduces several categories of enterprise risk:

- Deepfake impersonation

- Fraud and social engineering

- Misinformation amplification

- Biometric misuse

- Copyright and IP violations

- Contractual data misuse

Most AI safety discussions stop at identifying synthetic content. Enterprise systems need to go further.

They must govern the entire lifecycle of media assets:

- generation

- ingestion

- processing

- distribution

That requires both technical safeguards and policy enforcement infrastructure.

Synthetic Image Detection

Detection systems attempt to answer a simple question:

“Is this image synthetic?”

There are several common approaches.

Passive (Forensic) Detection

These techniques analyze the media itself (pixels, video frames, or audio) for statistical inconsistencies. Examples include:

Artifact detection

- pixel-level inconsistencies

- GAN fingerprinting

- diffusion noise patterns

- lighting anomalies

Deepfake-specific signals

- facial warping artifacts

- eye-blink irregularities

- audio-visual desynchronization

- temporal instability

These approaches can work in the short term. But they are reactive by design. As generative models improve, the artifacts weaken. Detection becomes an ongoing arms race.
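To make "temporal instability" concrete, here is a toy heuristic, assuming each video frame arrives as a flattened list of grayscale pixel values: measure how much each frame changes from the previous one and flag transitions that spike far above the clip's typical change. The 4x-median threshold and the function names are illustrative assumptions; real deepfake detectors learn much richer features and usually track face regions specifically.

```python
from statistics import median
from typing import List

Frame = List[float]  # flattened grayscale pixel values for one frame

def frame_differences(frames: List[Frame]) -> List[float]:
    """Mean absolute pixel change between each pair of consecutive frames."""
    return [sum(abs(a - b) for a, b in zip(f0, f1)) / len(f0)
            for f0, f1 in zip(frames, frames[1:])]

def unstable_transitions(frames: List[Frame], factor: float = 4.0) -> List[int]:
    """Indices i where the change from frame i to i+1 exceeds factor x the median change."""
    diffs = frame_differences(frames)
    baseline = median(diffs) if diffs else 0.0
    # Guard against a zero baseline on perfectly static clips.
    return [i for i, d in enumerate(diffs) if d > factor * max(baseline, 1e-9)]
```

A legitimate scene cut triggers the same flag, which is a small example of why hand-tuned artifact signals are brittle.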

Model Fingerprinting

Another approach attempts to identify the statistical signature of specific model families. Examples include:

- GAN residual patterns

- diffusion noise fingerprints

- generator-specific statistical traces

This can work when the generating model is known. However, fingerprinting can be weakened through:

- fine-tuning

- regeneration

- post-processing
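A minimal sketch of the residual-correlation idea behind fingerprinting, assuming grayscale images as 2D lists of floats and a reference fingerprint (a flattened noise residual) already estimated for one generator. The 3x3 box blur is a crude stand-in for the denoisers real forensic tools use, and every function name here is illustrative rather than a published detector:

```python
import math
from typing import List

Image = List[List[float]]

def box_blur(img: Image) -> Image:
    """3x3 mean filter with edge clamping; a crude stand-in for a real denoiser."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    total += img[yy][xx]
            out[y][x] = total / 9
    return out

def noise_residual(img: Image) -> List[float]:
    """Image minus its smoothed copy, flattened: keeps high-frequency traces."""
    blurred = box_blur(img)
    return [img[y][x] - blurred[y][x]
            for y in range(len(img)) for x in range(len(img[0]))]

def normalized_correlation(a: List[float], b: List[float]) -> float:
    """Pearson correlation in [-1, 1]; returns 0.0 if either signal is flat."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    da = [v - ma for v in a]
    db = [v - mb for v in b]
    denom = math.sqrt(sum(v * v for v in da) * sum(v * v for v in db))
    return sum(x * y for x, y in zip(da, db)) / denom if denom else 0.0

def fingerprint_score(img: Image, reference: List[float]) -> float:
    """How strongly an image's residual matches a known generator fingerprint."""
    return normalized_correlation(noise_residual(img), reference)
```

In practice the reference is averaged over many outputs of a single generator. Fine-tuning, regeneration, or heavy post-processing shift or wash out that residual, which is why the score degrades in exactly the cases listed above.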

Active Defenses

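Passive methods inspect content after it has been created. Active defenses instead embed verifiable signals at creation time. The distinction matters: detection tries to identify synthetic media, while provenance verifies origin. That is a fundamentally stronger model.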

Watermarking

Watermarks embed signals into generated images. They can be either visible or invisible.

Visible watermarking

- overlays

- logos

- labels

These provide transparency but are easily removed.

Invisible watermarking

- pixel-space embedding

- frequency-domain embedding

- diffusion watermarking

These methods are more robust, but they remain vulnerable to:

- cropping

- regeneration

- screenshotting

Watermarks are helpful. They are not sufficient on their own.
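To see why invisible watermarks are fragile, consider the simplest pixel-space scheme: hiding payload bits in the least significant bit (LSB) of pixel values. A minimal sketch, assuming 8-bit grayscale pixels in a flat list; the function names are hypothetical. Production schemes (frequency-domain or diffusion-based) are far more robust, but this toy version makes the cropping failure mode easy to see:

```python
from typing import List

def embed_lsb(pixels: List[int], payload: bytes) -> List[int]:
    """Write each payload bit (most significant first) into one pixel's LSB."""
    bits = [(byte >> (7 - i)) & 1 for byte in payload for i in range(8)]
    if len(bits) > len(pixels):
        raise ValueError("image too small for payload")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit  # clear the LSB, then set it to the payload bit
    return marked

def extract_lsb(pixels: List[int], n_bytes: int) -> bytes:
    """Read n_bytes back from the pixel LSBs, assuming the payload starts at pixel 0."""
    out = bytearray()
    for b in range(n_bytes):
        value = 0
        for i in range(8):
            value = (value << 1) | (pixels[b * 8 + i] & 1)
        out.append(value)
    return bytes(out)
```

Each pixel changes by at most one intensity level, so the mark is invisible. Yet dropping even a few leading pixels (a crude stand-in for cropping) desynchronizes the decoder, and lossy re-encoding overwrites LSBs entirely.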

Cryptographic Provenance

A stronger long-term approach focuses on verifying origin. This includes:

- content signing at generation

- public-key cryptography

- tamper-evident metadata

- device-backed signatures

- immutable content hashes

This shifts the problem from detection to trust infrastructure.
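The core mechanics can be sketched with the Python standard library: hash the content, put the hash inside a metadata record, and sign the record so that any change to either the bytes or the metadata is detectable. One loud assumption: the sketch uses an HMAC with a shared secret as a stand-in for the public-key signature a real provenance system would use (for example, an Ed25519 or ECDSA key held by a device or generator), only because HMAC ships with the standard library. The record fields and key are hypothetical.

```python
import hashlib
import hmac
import json

# ASSUMPTION: HMAC with a shared secret stands in for a public-key signature.
# A real system signs with a private key and verifiers check with the public key.
SIGNING_KEY = b"demo-key-do-not-use"

def sign_content(content: bytes, metadata: dict, key: bytes = SIGNING_KEY) -> dict:
    """Bind metadata to the exact content bytes, then sign the combined record."""
    record = dict(metadata, content_sha256=hashlib.sha256(content).hexdigest())
    payload = json.dumps(record, sort_keys=True).encode()  # canonical serialization
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"record": record, "signature": signature}

def verify_content(content: bytes, signed: dict, key: bytes = SIGNING_KEY) -> bool:
    """True only if neither the content nor its metadata changed since signing."""
    record = signed["record"]
    payload = json.dumps(record, sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, signed["signature"]):
        return False  # metadata altered, or signed with a different key
    return record["content_sha256"] == hashlib.sha256(content).hexdigest()
```

Changing a single byte of the content, or a single field of the metadata, makes verification fail.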

The Emerging Standard: C2PA

The leading standard for media provenance is C2PA (Coalition for Content Provenance and Authenticity).

C2PA is supported by organizations including:

- Adobe

- Microsoft

- BBC

It embeds cryptographically signed metadata that records:

- where content was created

- what tools modified it

- how it was distributed

C2PA support is increasingly appearing in:

- cameras

- editing software

- publishing platforms

For organizations building provenance infrastructure today, C2PA is the reference architecture.
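C2PA manifests record the actions applied to an asset and the ingredients it was derived from, bound to the content by hashes and signatures. The chain-of-custody check can be approximated with a simplified, hypothetical data structure. This is not the C2PA manifest format and not the API of the C2PA project's open-source SDKs, which handle parsing and signature validation in real deployments. Signing is omitted for brevity; the sketch checks only that the recorded history is unbroken and ends at the asset being inspected:

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional

def sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

@dataclass
class ProvenanceStep:
    """One entry in a simplified, hypothetical manifest chain (not the C2PA format)."""
    action: str                     # e.g. "created", "edited"
    tool: str                       # device or software that performed the action
    ingredient_hash: Optional[str]  # hash of the input asset; None for an original capture
    output_hash: str                # hash of the asset this step produced

def verify_chain(steps: List[ProvenanceStep], final_asset: bytes) -> bool:
    """Each step must consume the previous output, and the chain must end at this asset."""
    if not steps or steps[0].ingredient_hash is not None:
        return False  # history must begin with an original creation step
    for prev, step in zip(steps, steps[1:]):
        if step.ingredient_hash != prev.output_hash:
            return False  # gap: an unrecorded modification happened in between
    return steps[-1].output_hash == sha256(final_asset)

def history(steps: List[ProvenanceStep]) -> List[str]:
    """Human-readable summary of what touched the asset, in order."""
    return [f"{s.action} by {s.tool}" for s in steps]
```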

Why Detection Alone Is Insufficient

As generative models approach photorealism:

- visual artifacts disappear

- detection models become adversarial targets

- synthetic content becomes statistically indistinguishable

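This means detection will become less reliable over time. Regulation is also shifting the emphasis from after-the-fact detection to marking and disclosure at the source. The EU AI Act, for example, requires providers of generative AI systems to mark synthetic outputs in a machine-readable, detectable format (watermarking is one way to do this) and requires deployers to disclose deepfakes. This shifts synthetic media safeguards from a best practice to a compliance requirement.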

The Missing Layer: Enterprise Governance

Even with detection and provenance, another layer is required.

Governance.

Synthetic media risks are not only technical problems. They are also legal and operational problems. Enterprise systems must enforce:

- contractual data rights

- licensing constraints

- regulatory obligations

- intellectual property ownership

- user permissions

This enforcement must occur before and after content enters the system.

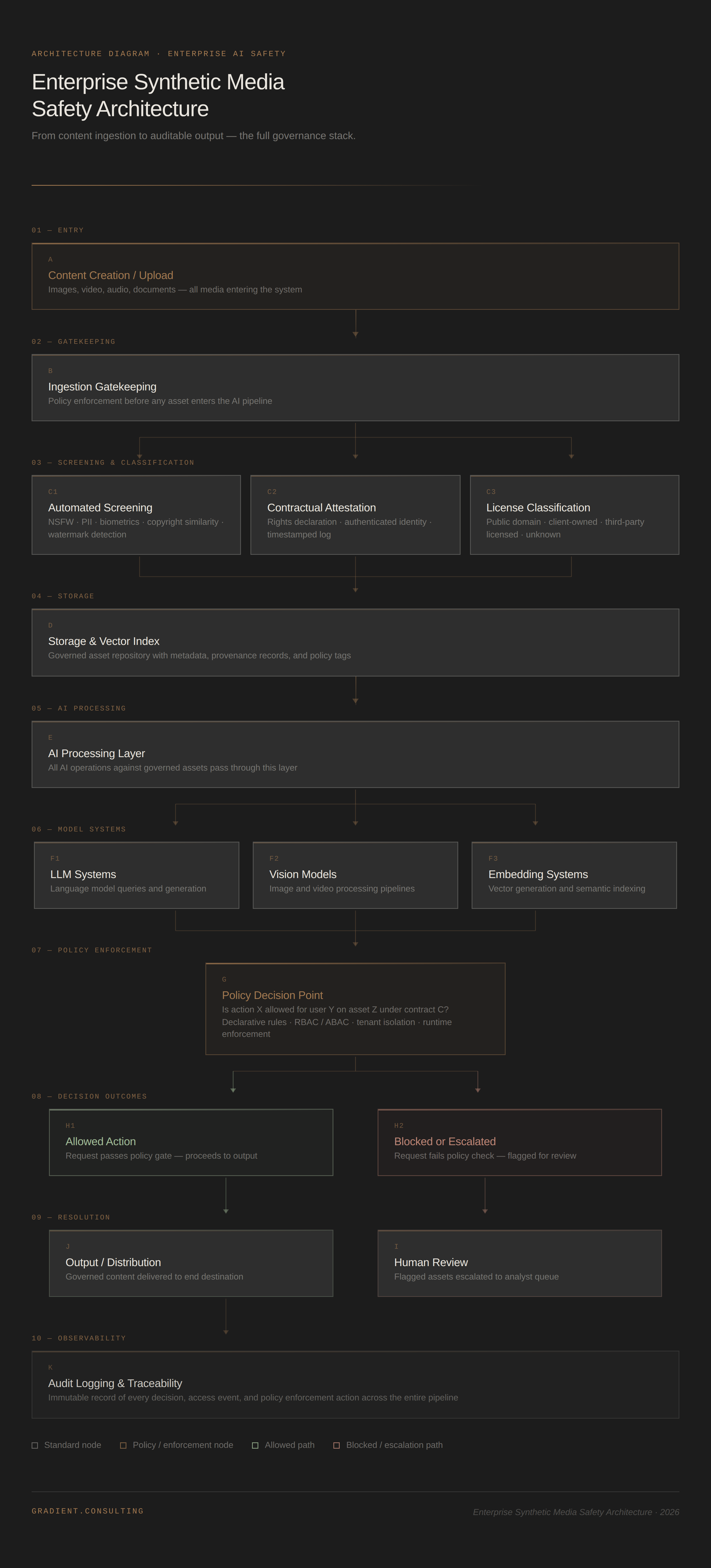

Ingestion Gatekeeping

Before content enters an AI system — storage systems, vector databases, or model pipelines — it should pass through a policy gate.

Contractual Attestation

At upload time:

- users attest they have rights to the asset

- the submission is tied to an authenticated identity

- the event is timestamped and logged

This provides:

- legal traceability

- auditability

- liability protection
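The attestation record and its log can be sketched with the standard library. A minimal sketch, assuming the caller has already authenticated the user: the attestation binds the user ID to a SHA-256 hash of the exact uploaded bytes, and each log entry commits to the hash of the previous one, so rewriting history breaks the chain. Class and field names are hypothetical. Hash chaining makes the log tamper-evident, not tamper-proof; production systems typically also anchor it in write-once storage or an external timestamping service.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List

@dataclass(frozen=True)
class Attestation:
    user_id: str       # authenticated identity from the upload session (assumed)
    asset_sha256: str  # ties the attestation to the exact bytes uploaded
    statement: str     # the rights claim the user agreed to
    timestamp: str     # UTC, ISO 8601

def attest(user_id: str, asset: bytes, statement: str) -> Attestation:
    return Attestation(user_id, hashlib.sha256(asset).hexdigest(), statement,
                       datetime.now(timezone.utc).isoformat())

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: List[dict] = []

    @staticmethod
    def _hash(prev: str, event: dict) -> str:
        body = json.dumps({"prev": prev, "event": event}, sort_keys=True)
        return hashlib.sha256(body.encode()).hexdigest()

    def append(self, event: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = self._hash(prev, event)
        self.entries.append({"prev": prev, "event": event, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every link; editing any past entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or self._hash(prev, entry["event"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```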

Automated Screening

Systems should also perform automated checks during ingestion. Examples include:

- NSFW detection

- PII detection

- biometric detection

- copyright similarity detection (pHash/dHash)

- watermark detection

- public figure recognition

Flagged content may be:

- rejected

- restricted

- escalated to human review
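The copyright-similarity check and the reject/restrict/escalate decision can be sketched together. A minimal sketch, assuming the image has already been converted to grayscale and resized to 9 columns by 8 rows (normally done with an imaging library such as Pillow, omitted here). The 10-bit Hamming threshold, the flag names, and the flag-to-outcome mapping are illustrative assumptions, not recommended values:

```python
from typing import Dict, List, Set

def dhash(gray: List[List[int]]) -> int:
    """Difference hash of a grayscale image already resized to 8 rows x 9 columns."""
    bits = 0
    for row in gray:
        for left, right in zip(row, row[1:]):  # 8 comparisons per row -> 64 bits total
            bits = (bits << 1) | (1 if left > right else 0)
    return bits

def hamming(a: int, b: int) -> int:
    return bin(a ^ b).count("1")

def is_near_duplicate(candidate: int, known: List[int], max_distance: int = 10) -> bool:
    """Flag assets whose hash is within max_distance bits of a known protected work."""
    return any(hamming(candidate, k) <= max_distance for k in known)

# Hypothetical routing policy: the most severe outcome among all flags wins.
SEVERITY = {"accept": 0, "restrict": 1, "escalate": 2, "reject": 3}
FLAG_ACTIONS: Dict[str, str] = {
    "nsfw": "reject",
    "copyright_match": "escalate",
    "public_figure": "escalate",
    "pii": "restrict",
    "biometric": "restrict",
    "watermark_found": "restrict",
}

def route(flags: Set[str]) -> str:
    outcome = "accept"
    for flag in flags:
        action = FLAG_ACTIONS.get(flag, "escalate")  # unrecognized flags go to a human
        if SEVERITY[action] > SEVERITY[outcome]:
            outcome = action
    return outcome
```

Because dHash compares neighboring pixels rather than absolute values, a uniform brightness change leaves the hash unchanged. Perceptual hashes catch near-duplicates of known works; they do not detect stylistic imitation.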

License Classification

Assets should be classified according to usage rights. Examples include:

- public domain

- client-owned

- third-party licensed

- unknown

Usage policies should derive from these classifications.
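A minimal sketch of deriving usage policy from license classification, assuming the four classes above. The permission names and per-class defaults are hypothetical placeholders for what legal review would decide; the key design choice is that UNKNOWN defaults to no permitted uses:

```python
from enum import Enum
from typing import Dict, FrozenSet, Optional

class LicenseClass(Enum):
    PUBLIC_DOMAIN = "public_domain"
    CLIENT_OWNED = "client_owned"
    THIRD_PARTY_LICENSED = "third_party_licensed"
    UNKNOWN = "unknown"

# Hypothetical default usage rights per class; real values come from contracts and counsel.
BASE_PERMISSIONS: Dict[LicenseClass, FrozenSet[str]] = {
    LicenseClass.PUBLIC_DOMAIN: frozenset({"view", "embed", "train", "export"}),
    LicenseClass.CLIENT_OWNED: frozenset({"view", "embed", "export"}),  # training needs opt-in
    LicenseClass.THIRD_PARTY_LICENSED: frozenset({"view"}),  # widened only by explicit grants
    LicenseClass.UNKNOWN: frozenset(),  # default deny until rights are established
}

def allowed_uses(license_class: LicenseClass,
                 granted: Optional[FrozenSet[str]] = None) -> FrozenSet[str]:
    """Usage rights from the classification, plus grants for third-party licenses only."""
    base = BASE_PERMISSIONS[license_class]
    if license_class is LicenseClass.THIRD_PARTY_LICENSED and granted:
        return base | granted
    return base
```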

Policy-as-Code Architecture

Once assets enter the system, governance must continue. This requires runtime policy enforcement. A typical architecture includes a centralized Policy Decision Point (PDP). Before actions such as:

- LLM queries

- embedding generation

- vision processing

- cross-tenant data access

- export or download

The system evaluates:

Is action X allowed for user Y on asset Z under contract C?

This requires:

- declarative policy rules

- role-based and attribute-based access control

- tenant isolation

- immutable logging

In effect, contract enforcement becomes part of system architecture.
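In production, the PDP role is usually filled by a dedicated policy engine with a declarative policy language (Open Policy Agent with Rego, or AWS Cedar, for example). The Python below hard-codes the rules only to show the shape of the decision "is action X allowed for user Y on asset Z under contract C?". The class names, role table, and check order are hypothetical:

```python
from dataclasses import dataclass, field
from datetime import date
from typing import FrozenSet, List, Tuple

@dataclass(frozen=True)
class User:
    user_id: str
    tenant_id: str
    roles: FrozenSet[str]

@dataclass(frozen=True)
class Asset:
    asset_id: str
    tenant_id: str
    license_class: str

@dataclass(frozen=True)
class Contract:
    contract_id: str
    tenant_id: str
    permitted_actions: FrozenSet[str]
    expires: date

# Role-based layer: which roles may attempt which actions (hypothetical).
ROLE_ACTIONS = {
    "analyst": frozenset({"llm_query", "vision_processing"}),
    "engineer": frozenset({"llm_query", "embedding_generation", "vision_processing"}),
    "admin": frozenset({"llm_query", "embedding_generation", "vision_processing", "export"}),
}

@dataclass
class PolicyDecisionPoint:
    decision_log: List[Tuple[str, str, str, str, bool, str]] = field(default_factory=list)

    def decide(self, action: str, user: User, asset: Asset, contract: Contract,
               today: date) -> Tuple[bool, str]:
        """Attribute- and role-based checks in a fixed order; anything unmatched is denied."""
        if user.tenant_id != asset.tenant_id or contract.tenant_id != asset.tenant_id:
            result = (False, "tenant isolation")
        elif not any(action in ROLE_ACTIONS.get(r, frozenset()) for r in user.roles):
            result = (False, "role does not permit action")
        elif action not in contract.permitted_actions:
            result = (False, "contract does not permit action")
        elif today > contract.expires:
            result = (False, "contract expired")
        elif asset.license_class == "unknown":
            result = (False, "asset rights unknown")
        else:
            result = (True, "allowed")
        # Every decision, allowed or denied, is recorded for audit.
        self.decision_log.append((action, user.user_id, asset.asset_id,
                                  contract.contract_id, *result))
        return result
```

Because the first failing check determines the reason, every denial is explainable in an audit review.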

Enterprise Synthetic Media Safety Architecture

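A simplified enterprise-grade architecture stacks the layers described above:

- provenance verification (C2PA manifests, watermark detection)

- ingestion gatekeeping (contractual attestation, automated screening, license classification)

- runtime policy enforcement through a Policy Decision Point

- immutable audit logging and human review workflows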

This architecture combines technical safeguards, governance, and policy enforcement into a single operating model.
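The ingestion side of this layering can be sketched as an ordered series of gates that stops at the first failure. A minimal sketch under illustrative assumptions: the `Submission` fields stand in for the outputs of real identity, provenance, screening, and license-classification services, and the gate names and rules are hypothetical. Runtime access decisions after ingestion would still go through a Policy Decision Point.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple

@dataclass
class Submission:
    content: bytes
    user_id: Optional[str]       # None means the upload was not authenticated
    rights_attested: bool
    provenance_verified: bool    # assumed output of a C2PA or watermark check
    screening_flags: List[str] = field(default_factory=list)
    license_class: str = "unknown"

Check = Callable[[Submission], Optional[str]]  # returns a rejection reason, or None

# Ordered ingestion gates; names and rules are illustrative assumptions.
GATES: List[Tuple[str, Check]] = [
    ("identity", lambda s: None if s.user_id else "unauthenticated upload"),
    ("attestation", lambda s: None if s.rights_attested else "missing rights attestation"),
    ("screening", lambda s: ("flagged: " + ",".join(sorted(s.screening_flags))
                             if s.screening_flags else None)),
    ("license", lambda s: "license unknown" if s.license_class == "unknown" else None),
]

def ingest(sub: Submission) -> dict:
    """Run gates in order and stop at the first failure; label provenance either way."""
    provenance = "verified" if sub.provenance_verified else "unverified"
    for name, check in GATES:
        reason = check(sub)
        if reason is not None:
            return {"admitted": False, "gate": name, "reason": reason, "provenance": provenance}
    return {"admitted": True, "gate": None, "reason": None, "provenance": provenance}
```

One design choice worth noting: missing provenance is recorded as a label rather than treated as grounds for rejection, because much legitimate content does not yet carry content credentials.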

The Strategic Direction

Long-term AI safety will not be won through better artifact detection. It will emerge from infrastructure. Specifically:

- cryptographic provenance systems

- policy-aware runtime enforcement

- contract-bound governance

- enterprise auditability

Detection is tactical. Governance is structural. And regulatory frameworks are increasingly pushing organizations in that direction.

What This Means for Leaders

Organizations building or adopting AI systems that process media, documents, or user-generated content should think about safety and governance as infrastructure decisions, not afterthoughts. Several implications follow.

Detection alone will not solve synthetic media risk

Most current solutions focus on identifying fake content after it appears. As generative models improve, that approach will become less reliable. Organizations should prioritize verifiable provenance systems rather than relying solely on forensic detection.

Provenance will become part of compliance

Regulations such as the EU AI Act now include requirements for disclosure and machine-readable labeling of synthetic content. Standards like C2PA are likely to become foundational components of enterprise AI infrastructure.

Governance must be built into AI systems

Enterprises should treat AI systems the same way they treat financial systems or identity systems — with built-in policy enforcement, traceability, and auditability. In practice, that means implementing:

- ingestion controls

- license classification

- policy enforcement layers

- audit logging

Policy enforcement must happen at runtime

Governance cannot rely on static policies or manual oversight alone. Enterprise AI systems must enforce rules dynamically, every time content is processed, accessed, or distributed.

AI safety is becoming an architecture problem

The strongest solutions combine:

- cryptographic provenance

- ingestion controls

- policy-as-code governance

- human oversight workflows

Organizations that treat AI safety as a system architecture challenge — rather than only a model safety challenge — will be far better positioned as synthetic media risk and regulation continue to evolve.

TL;DR

- Artifact-based deepfake detection is temporary

- Watermarking helps, but it can be removed

- Cryptographic provenance (such as C2PA) provides stronger guarantees

- AI systems should enforce ingestion gatekeeping

- Contractual data rights must be enforced at runtime

- Policy-as-code architecture is essential

- Governance is quickly becoming a regulatory requirement

The future of AI safety is not about identifying what is fake. It is about proving what is genuine.